Recent Work

(Current) Project

Model Chess Engine with NNUE Evaluation

Inspired by and trained on Stockfish 16, this model chess engine uses minimax search with alpha-beta pruning and an NNUE (Nasu, 2018) network as its evaluation function.
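The search side of such an engine can be illustrated with a minimal sketch of minimax with alpha-beta pruning on a toy game tree. This is not the project's code: the nested-list tree and the `alphabeta` function are assumptions made for illustration, and in a real engine the leaf scores would come from the evaluation function (here, the NNUE network) rather than hard-coded numbers.

```python
import math


def alphabeta(node, alpha=-math.inf, beta=math.inf, maximizing=True):
    """Return the minimax value of a toy tree, skipping branches that cannot matter.

    Leaves are numbers (static evaluations); internal nodes are lists of children.
    """
    if not isinstance(node, list):
        return node  # leaf: stand-in for the evaluation function

    if maximizing:
        best = -math.inf
        for child in node:
            best = max(best, alphabeta(child, alpha, beta, False))
            alpha = max(alpha, best)
            if alpha >= beta:
                break  # the minimizing parent will never allow this line
        return best

    best = math.inf
    for child in node:
        best = min(best, alphabeta(child, alpha, beta, True))
        beta = min(beta, best)
        if beta <= alpha:
            break  # the maximizing parent already has a better option
    return best


# Hypothetical two-ply tree: the root maximizes, its children minimize.
tree = [[3, 5], [2, 9], [0, 1]]
best_value = alphabeta(tree)
```

In the second subtree the leaf `9` is never visited: once the minimizer finds `2`, that branch cannot beat the `3` already guaranteed by the first subtree.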

(Current) Article

An Intuitive Look Into Normalizing Flows Architectures

A presentation of the intuition behind normalizing flows and how they are realized in modern architectures such as NICE (Dinh et al., 2015) and Glow (Kingma and Dhariwal, 2018).
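To make the idea concrete, here is a minimal sketch of an additive coupling layer in the style of NICE, written in plain Python. It is an illustration only, not material from the article: the `shift` function is a hypothetical stand-in for the learned network, and the input is assumed to have even length. The layer copies the first half of the input and shifts the second half by a function of the first, so it is trivially invertible and its Jacobian log-determinant is zero.

```python
import math


def shift(x1):
    # Hypothetical stand-in for the learned coupling network m(x1).
    return [math.tanh(v) for v in x1]


def coupling_forward(x):
    """Map x -> y with y1 = x1 and y2 = x2 + m(x1); also return log|det J|."""
    if len(x) % 2 != 0:
        raise ValueError("this sketch assumes an even-length input")
    half = len(x) // 2
    x1, x2 = x[:half], x[half:]
    y2 = [b + s for b, s in zip(x2, shift(x1))]
    # The Jacobian is triangular with ones on the diagonal, so log|det J| = 0.
    return x1 + y2, 0.0


def coupling_inverse(y):
    """Undo the layer: x1 = y1 and x2 = y2 - m(y1)."""
    if len(y) % 2 != 0:
        raise ValueError("this sketch assumes an even-length input")
    half = len(y) // 2
    y1, y2 = y[:half], y[half:]
    x2 = [b - s for b, s in zip(y2, shift(y1))]
    return y1 + x2


x = [0.5, -1.0, 2.0, 0.25]
y, log_det = coupling_forward(x)
x_recovered = coupling_inverse(y)
```

Because inversion only needs `shift` to be evaluated, never inverted, the stand-in could be replaced by an arbitrarily complex network without losing invertibility.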

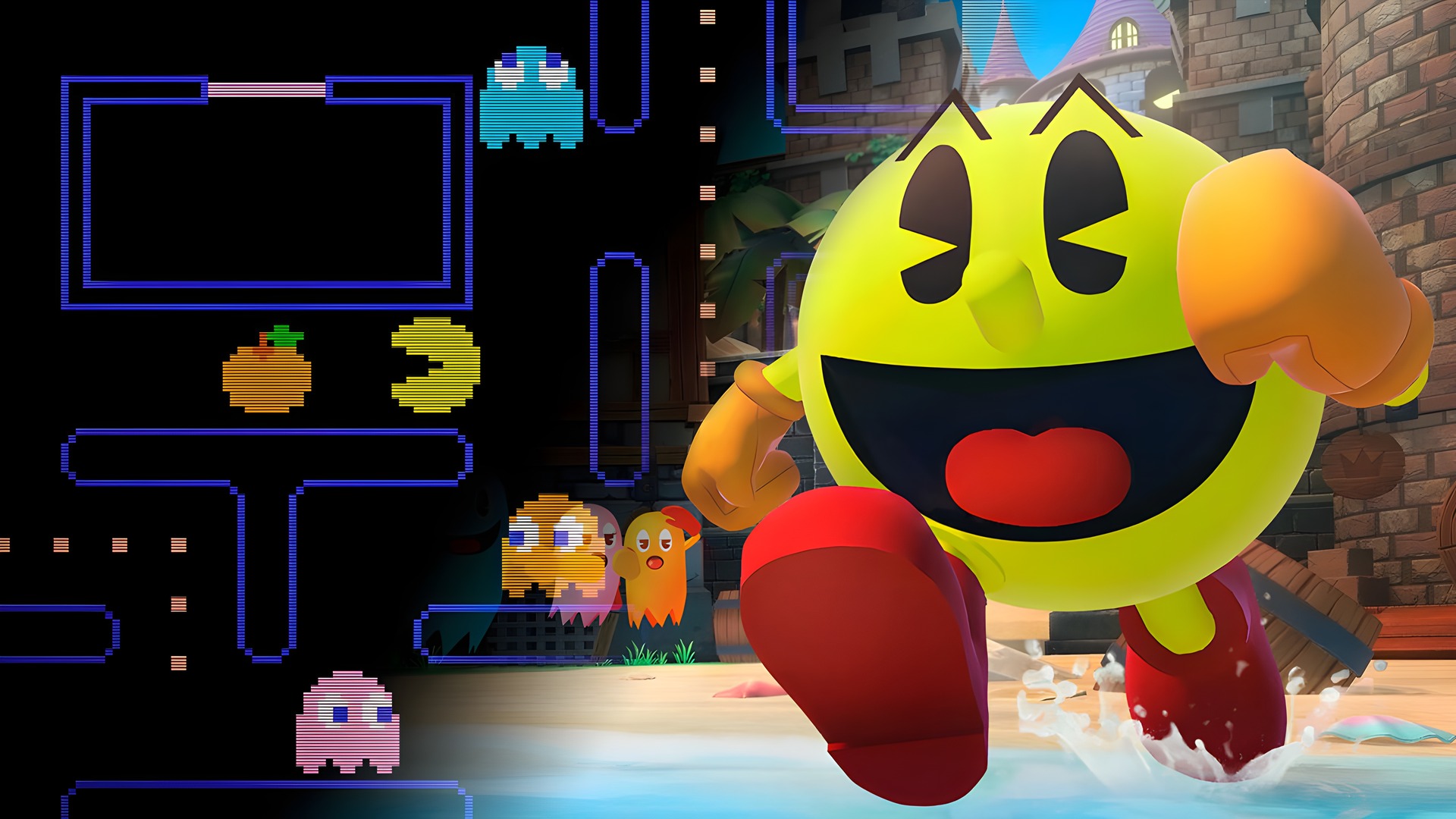

Project

Reinforcement Learning Pacman AI

A single-agent reinforcement learning project that uses a Dueling Deep Q-Network (DDQN) architecture to play Atari's "Ms. Pac-Man" in OpenAI Gym. An epsilon-greedy strategy balances exploration and exploitation.
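The two ideas named here can be sketched in a few lines of plain Python. This is an illustrative sketch under stated assumptions, not the project's implementation: `dueling_q` and `epsilon_greedy` are hypothetical helper names, and the value and advantage numbers are made up. The dueling head combines a state value with per-action advantages using the common mean-subtracted form, and epsilon-greedy picks a random action with probability epsilon and the greedy action otherwise.

```python
import random


def dueling_q(value, advantages):
    """Combine V(s) and A(s, a) into Q(s, a) = V + A - mean(A)."""
    mean_adv = sum(advantages) / len(advantages)
    return [value + a - mean_adv for a in advantages]


def epsilon_greedy(q_values, epsilon, rng=random):
    """Explore with probability epsilon, otherwise exploit the best-known action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore: uniform random action
    # Exploit: index of the largest Q-value (first one wins on ties).
    return max(range(len(q_values)), key=q_values.__getitem__)


# Made-up outputs of the two network streams for one state with three actions.
q_values = dueling_q(1.0, [1.0, 2.0, 3.0])
greedy_action = epsilon_greedy(q_values, epsilon=0.0)
```

In practice epsilon is usually decayed over training so the agent explores heavily at first and exploits more as its Q-estimates improve; the schedule used in this project is not stated.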

Project

Spotify Color Sorter

A locally run application that sorts personal Spotify playlists by album cover color. It uses the Spotify Web API with OAuth 2.0 to securely access only the user's playlist data, and it does not track any data.
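One plausible way to order tracks by cover color is to sort on hue. The sketch below is an assumption, not the application's actual method: it presumes each track already has a single dominant RGB color extracted from its cover, and the track names, colors, and `hue_key` helper are hypothetical. It uses only the standard library's `colorsys` module and makes no Spotify API calls.

```python
import colorsys


def hue_key(rgb):
    """Sort key: (hue, saturation, value) of an 8-bit RGB color."""
    r, g, b = (channel / 255 for channel in rgb)
    return colorsys.rgb_to_hsv(r, g, b)


# Hypothetical (track name, dominant cover color) pairs.
tracks = [
    ("Track A", (0, 0, 255)),   # blue cover
    ("Track B", (255, 0, 0)),   # red cover
    ("Track C", (0, 255, 0)),   # green cover
]

sorted_tracks = sorted(tracks, key=lambda track: hue_key(track[1]))
sorted_names = [name for name, _ in sorted_tracks]
```

Sorting on hue first produces a rainbow-like order (red, green, blue in this example); saturation and value only break ties between covers with the same hue.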

Project

Kaggle Contest - Store Sales Time Series Forecasting

A "sequence-to-one" Transformer model based on the "Attention Is All You Need" (Vaswani et al., 2017) architecture for forecasting retail sales time series at Corporación Favorita, an Ecuadorian retailer.
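"Sequence-to-one" means the model reads a window of past observations and emits a single prediction. A minimal sketch of how such training pairs might be built from a sales series is shown below. The `make_windows` helper, the window length, and the choice of the next value as the target are assumptions for illustration; the project's actual preprocessing is not described here.

```python
def make_windows(series, window):
    """Split a series into (input window, next value) training pairs."""
    if window <= 0:
        raise ValueError("window must be positive")
    pairs = []
    for start in range(len(series) - window):
        inputs = series[start:start + window]   # the input sequence
        target = series[start + window]         # the single value to predict
        pairs.append((inputs, target))
    return pairs


# Made-up daily sales figures, for illustration only.
sales = [10, 12, 11, 15, 14]
pairs = make_windows(sales, window=3)
```

Each pair would then be fed to the model as one example: the window as the encoder input and the target as the scalar the output head is trained to match.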

(MOCK) RESEARCH PAPER

LSTMs vs Transformers for Time Series Forecasting

This mock research paper compares the effectiveness of Long Short-Term Memory (LSTM) and Transformer models for time series forecasting.